Open the Ollama model library and you will find hundreds of models available to pull: Llama, Qwen, DeepSeek, Gemma, Phi, Mistral, each in several sizes (1B, 7B, 14B, 70B), not to mention quantization levels such as q4, q8, and fp16. (Note that ollama list only shows the models you have already downloaded.) For a beginner, this is overwhelming.

This article will help you understand each popular model family in 2025, compare them with each other, and most importantly: choose which model suits your VPS configuration.

How to Read Model Names in Ollama

Before diving into comparisons, you need to know how to read model names. For example:

qwen2.5:7b-instruct-q4_K_M

This name consists of 4 parts:

- qwen2.5: Model family (the model series, developed by Alibaba)

- 7b: Number of parameters (7 billion). Larger models are generally more capable but also consume more RAM

- instruct: Variant fine-tuned for chat and instruction following. On Ollama, the short default tags (such as qwen2.5:7b) usually already point to the instruct variant; base models (meant for further training, not for chat) are typically tagged separately

- q4_K_M: Quantization level, a compression technique that reduces RAM usage. q4 = 4-bit (light), q8 = 8-bit (heavier, closer to the original quality), fp16 = 16-bit half precision (unquantized, heaviest, highest quality)
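The naming scheme above can be sketched as a small parser. This is only an illustration of the family:size-variant-quantization convention described here; parse_model_tag and ModelTag are hypothetical names, and real Ollama tags do not always follow this pattern (for example, a tag such as latest would land in the size field).

```python
from typing import NamedTuple, Optional


class ModelTag(NamedTuple):
    family: str                   # e.g. "qwen2.5"
    size: Optional[str]           # e.g. "7b"
    variant: Optional[str]        # e.g. "instruct"
    quantization: Optional[str]   # e.g. "q4_K_M"


def parse_model_tag(name: str) -> ModelTag:
    """Split 'family:size-variant-quant' into parts; missing parts become None."""
    family, _, tag = name.partition(":")
    if not tag:
        return ModelTag(family, None, None, None)

    parts = tag.split("-")
    size = parts[0]  # assumption: the first element of the tag is the size
    variant = None
    quant = None
    for part in parts[1:]:
        lowered = part.lower()
        # Quantization labels look like "q4_K_M", "q8_0" or "fp16"
        is_quant = (lowered.startswith("q") and lowered[1:2].isdigit()) or lowered.startswith("fp")
        if is_quant:
            quant = part
        else:
            variant = part
    return ModelTag(family, size, variant, quant)


print(parse_model_tag("qwen2.5:7b-instruct-q4_K_M"))
```

A short name such as qwen2.5:7b parses with variant and quantization left as None, which matches the idea that the default tag hides those details.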

Tip: For most models, a default pull in Ollama gives you a 4-bit version, usually q4_K_M. This is a well-balanced quantization level between quality and RAM usage. You don’t need to change anything unless you have special requirements.

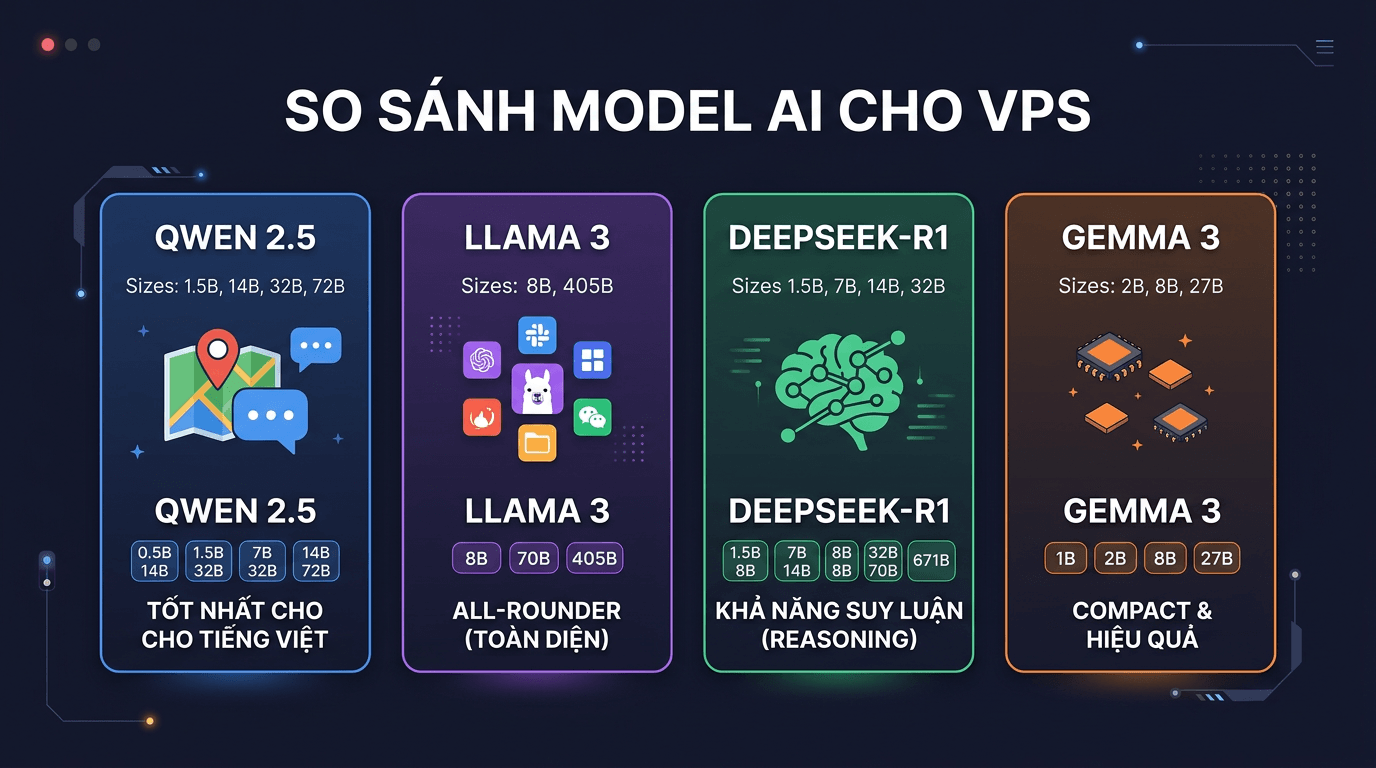

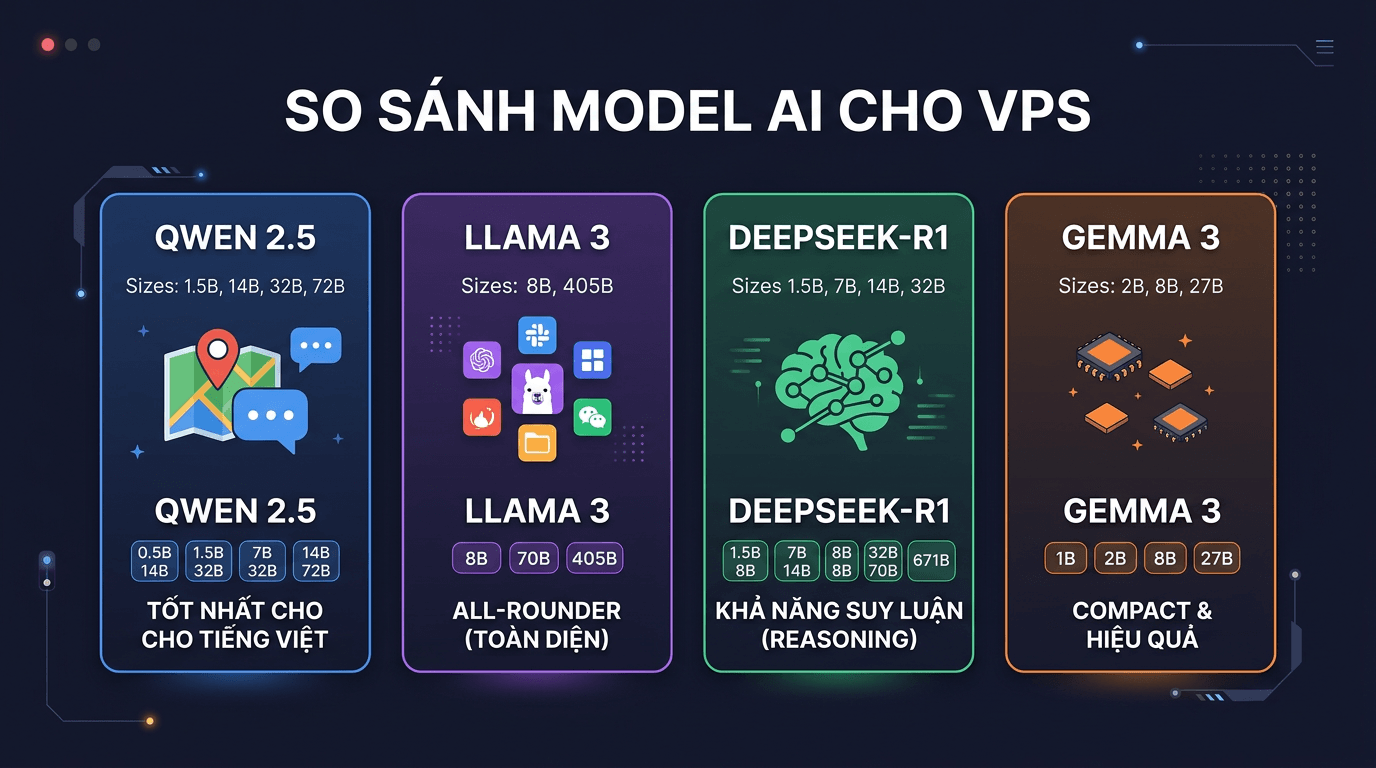

Most Popular Model Families Currently

Below are the 6 model families you’ll encounter most when self-hosting AI on VPS.

Qwen 2.5 (Alibaba)

This is the model family I recommend most for Vietnamese users. Qwen 2.5 was trained by Alibaba with a very large multilingual dataset, including Vietnamese. The result is that it understands and responds in Vietnamese much better than other models of the same size.

- Size: 0.5B, 1.5B, 3B, 7B, 14B, 32B, 72B

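- License: Apache 2.0 for most sizes (comfortable for commercial use); the 3B and 72B versions ship under separate Qwen licenses, so check the terms before using them commercially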

- Strengths: Excellent Vietnamese, has dedicated Coder version for programming, diverse sizes

- Weaknesses: Reasoning not as good as DeepSeek-R1 at the same size

Llama 3.x (Meta)

Llama is the de facto standard model family of the open-source AI community. Meta has invested heavily in it, and its community support is the strongest. Most tools, frameworks, and tutorials use Llama as the default model.

- Size: 1B, 3B, 8B, 70B, 405B

- License: Llama Community License (free for most use cases, restricted if over 700 million MAU)

- Strengths: All-rounder, large community, abundant documentation, stable

- Weaknesses: Vietnamese not as good as Qwen, 70B+ versions are very heavy

DeepSeek-R1 (DeepSeek)

DeepSeek-R1 is a model specialized in reasoning. It uses chain-of-thought, meaning the model will “think step by step” before giving an answer. Very suitable for logic problems, analysis, and complex programming.

- Size: 1.5B, 7B, 8B, 14B, 32B, 70B (distill versions)

- License: MIT

- Strengths: Superior reasoning, good coding, precise logical inference

- Weaknesses: Longer output (due to chain-of-thought), slower than regular models of the same size
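Because the reasoning comes before the final answer, it often helps to separate the two when you only want the answer. A minimal sketch, assuming the raw response wraps its reasoning in <think>...</think> tags (this depends on the model and Ollama version, so treat the tag format as an assumption); split_reasoning is a hypothetical helper:

```python
import re
from typing import Tuple

# Assumption: reasoning is wrapped in <think>...</think> ahead of the final answer.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>\s*", re.DOTALL)


def split_reasoning(raw: str) -> Tuple[str, str]:
    """Return (reasoning, answer) extracted from a raw response string."""
    match = THINK_BLOCK.search(raw)
    if match is None:
        # No reasoning block found: treat the whole text as the answer
        return "", raw.strip()
    reasoning = match.group(1).strip()
    answer = (raw[:match.start()] + raw[match.end():]).strip()
    return reasoning, answer


sample = "<think>\nThe user wants a short answer.\n</think>\n\nDNS maps names to IP addresses."
reasoning, answer = split_reasoning(sample)
print(answer)
```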

Gemma 3 (Google)

Google joined the open-weight game with Gemma. The highlight is very good quality for small sizes. If you have a low-configuration VPS but still want stable quality, Gemma is worth trying.

- Size: 1B, 4B, 12B, 27B

- License: Gemma License (Google's own terms; allows commercial use, subject to its usage restrictions)

- Strengths: Compact, high efficiency for size, multimodal (image support on the 4B and larger versions)

- Weaknesses: Average Vietnamese, few size choices

Phi-3.5 / Phi-4 (Microsoft)

Microsoft focuses on the “small but mighty” approach. Phi-3.5 has only 3.8B parameters but beats many 7B models on benchmarks. If you only have a 4GB RAM VPS, it is well worth considering.

- Size: 3.8B (Phi-3.5), 14B (Phi-4)

- License: MIT

- Strengths: Extremely light, high quality for size, MIT license

- Weaknesses: Few size choices, limited Vietnamese, smaller community

Mistral / Mixtral (Mistral AI)

A French startup famous for its Mixture of Experts (MoE) architecture. Mixtral 8x7B has 47B total parameters but only activates about 13B per token, so it’s fast while maintaining high quality.

- Size: 7B (Mistral), 8x7B / 8x22B (Mixtral)

- License: Apache 2.0

- Strengths: Fast, efficient MoE architecture, good coding

- Weaknesses: Mixtral needs a lot of RAM (though inference is fast), average Vietnamese
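The trade-off between Mixtral's RAM needs and its speed is simple arithmetic: every expert has to sit in memory, but only the active ones are read for each token. A rough sketch, assuming a flat 4 bits per weight (real q4 files use slightly more) and ignoring context memory:

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


TOTAL_B = 47    # total parameters in Mixtral 8x7B, in billions (from the text)
ACTIVE_B = 13   # parameters activated per token, in billions (from the text)
BITS = 4        # assumption: a flat 4 bits per weight

resident = weights_gb(TOTAL_B, BITS)   # all experts must be loaded
touched = weights_gb(ACTIVE_B, BITS)   # weights actually read per generated token

print(f"resident weights: ~{resident:.1f} GB")
print(f"read per token:   ~{touched:.1f} GB ({ACTIVE_B / TOTAL_B:.0%} of the model)")
```

So the model occupies RAM like a 47B model while generating at roughly the per-token cost of a 13B one.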

Overall Comparison Table

The table below compares models at the most popular sizes (mostly 7B-14B), using q4_K_M quantization on CPU:

| Model | Size | RAM needed (q4) | License | Vietnamese | Coding | Reasoning |
|---|---|---|---|---|---|---|
| Qwen 2.5 7B | 7B | ~5.5 GB | Apache 2.0 | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Llama 3.1 8B | 8B | ~6 GB | Llama License | ⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| DeepSeek-R1 8B | 8B | ~6 GB | MIT | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Gemma 3 12B | 12B | ~8 GB | Gemma License | ⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Phi-3.5 | 3.8B | ~3 GB | MIT | ⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ |
| Mistral 7B | 7B | ~5.5 GB | Apache 2.0 | ⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐ |
| Qwen 2.5 14B | 14B | ~10 GB | Apache 2.0 | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| DeepSeek-R1 14B | 14B | ~10 GB | MIT | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |

Choosing Models by VPS Configuration

This is the most important part. No matter how good a model is, if your VPS doesn’t have enough RAM, it’s useless. Below are specific suggestions for each configuration level:

4GB RAM VPS: Works, but Choose Carefully

With 4GB RAM, you need to reserve about 1GB for the operating system and Ollama, leaving ~3GB for the model. Best choices:

- Qwen 2.5 3B (q4_K_M) ~ 2.3 GB: Best Vietnamese quality in this segment

- Phi-3.5 3.8B (q4_K_M) ~ 2.8 GB: Higher benchmarks but weaker Vietnamese

- Gemma 3 1B (q4_K_M) ~ 1 GB: Extremely light, suitable for simple tasks
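The budget reasoning for a 4GB VPS (total RAM minus about 1GB reserved) can be written as a quick check. The sizes are the approximate figures quoted in this article; fits and usable_ram_gb are hypothetical helper names, and the 1GB reserve is the article's rule of thumb rather than a measured value.

```python
def usable_ram_gb(total_ram_gb: float, reserved_gb: float = 1.0) -> float:
    """RAM left for the model after reserving some for the OS and Ollama."""
    return max(total_ram_gb - reserved_gb, 0.0)


def fits(model_gb: float, total_ram_gb: float, reserved_gb: float = 1.0) -> bool:
    """True if the model's estimated footprint fits in the usable RAM."""
    return model_gb <= usable_ram_gb(total_ram_gb, reserved_gb)


# Approximate q4_K_M footprints in GB, as quoted in this article
CANDIDATES = {
    "qwen2.5:3b": 2.3,
    "phi3.5": 2.8,
    "gemma3:1b": 1.0,
    "qwen2.5:7b": 5.5,
}

for tag, size_gb in CANDIDATES.items():
    verdict = "fits" if fits(size_gb, total_ram_gb=4) else "too large"
    print(f"{tag:<12} {size_gb:>4.1f} GB  {verdict}")
```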

8GB RAM VPS: Sweet Spot for Beginners

8GB RAM is the level I recommend starting with if you’re serious about self-hosting AI. You can run most 7-8B models with good quality:

- Qwen 2.5 7B (q4_K_M) ~ 5.5 GB: #1 choice if using Vietnamese

- Llama 3.1 8B (q4_K_M) ~ 6 GB: All-rounder, abundant documentation and community

- DeepSeek-R1 8B (q4_K_M) ~ 6 GB: If you need strong reasoning

- Gemma 3 4B (q4_K_M) ~ 3 GB: Light, saves RAM for other applications

16GB RAM VPS: Step Up to a New Level

With 16GB, you unlock 14B models. This is where output quality starts to be truly impressive:

- Qwen 2.5 14B (q4_K_M) ~ 10 GB: Best pick. Vietnamese + coding + reasoning all good

- DeepSeek-R1 14B (q4_K_M) ~ 10 GB: Extremely strong reasoning at this level

- Gemma 3 12B (q4_K_M) ~ 8 GB: Lighter, runs faster

32GB+ RAM VPS: Running Large Models

32GB RAM allows running 32B models on CPU, but speed will be slow. If you want a smooth experience at this size, you should have a GPU:

- Qwen 2.5 32B (q4_K_M) ~ 22 GB: Quality close to commercial models

- DeepSeek-R1 32B (q4_K_M) ~ 22 GB: Reasoning rivals much larger models

- Gemma 3 27B (q4_K_M) ~ 18 GB: Good, slightly lighter

Note: The RAM numbers above are estimates for q4_K_M quantization models running on CPU. Actual usage may vary depending on context length and operating system. You should reserve at least 1-2 GB RAM for the system.
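If a model is not listed here, a back-of-the-envelope estimate is parameters times bits per weight divided by 8, plus some headroom for context and runtime. The sketch below is only that: the bits-per-weight averages and the 1GB overhead are assumptions chosen for illustration, and estimate_ram_gb is a hypothetical helper, not part of Ollama.

```python
# Approximate average bits per weight; assumptions for illustration, not exact values.
BITS_PER_WEIGHT = {"q4_K_M": 4.8, "q8_0": 8.5, "fp16": 16.0}


def estimate_ram_gb(params_billion: float, quant: str = "q4_K_M",
                    overhead_gb: float = 1.0) -> float:
    """Weights (params x bits / 8) plus a flat allowance for context and runtime."""
    weights_gb = params_billion * BITS_PER_WEIGHT[quant] / 8
    return weights_gb + overhead_gb


for size in (3, 7, 14, 32):
    print(f"{size}B at q4_K_M: ~{estimate_ram_gb(size):.1f} GB")
```

The results land in the same range as the figures listed above, which is all such a rule of thumb can promise. Longer context windows push the real number higher.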

Choosing Models by Use Case

Besides hardware configuration, you should also choose models based on your main usage purpose:

Chat and Vietnamese Processing → Qwen 2.5

If you need a chatbot that responds in Vietnamese, writes Vietnamese content, or processes Vietnamese documents, then Qwen 2.5 is the clearest choice. At every size from 3B to 72B, Qwen’s Vietnamese capabilities are superior to competitors in the same class.

Programming / Coding → DeepSeek or Qwen 2.5 Coder

Need AI to help write code? Two top choices:

- DeepSeek-R1: Good logical reasoning, great debugging, understands complex problems

- Qwen 2.5 Coder: A version specialized for coding that supports many programming languages and has a 7B version that runs comfortably on an 8GB VPS

Reasoning and Analysis → DeepSeek-R1

Logic problems, data analysis, explaining complex issues? DeepSeek-R1 with chain-of-thought will give higher quality output. In return, responses will be longer and slower because the model needs to “think” before answering.

General Purpose / Multi-use → Llama 3.1

If you don’t have specific needs and want a model that can do everything reasonably well, Llama 3.1 8B is a safe choice. Large community, abundant documentation, easy to find solutions when encountering errors.
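The use-case and RAM recommendations in this article can be combined into a small lookup. This is just a restatement of the picks above as data; recommend and PICKS are hypothetical names, and the RAM tiers are the article's rough levels, not hard limits.

```python
from typing import Optional

# (minimum VPS RAM in GB, Ollama tag), taken from the recommendations in this article
PICKS = {
    "vietnamese": [(4, "qwen2.5:3b"), (8, "qwen2.5:7b"), (16, "qwen2.5:14b"), (32, "qwen2.5:32b")],
    "coding": [(8, "qwen2.5-coder:7b")],
    "reasoning": [(8, "deepseek-r1:8b"), (16, "deepseek-r1:14b"), (32, "deepseek-r1:32b")],
    "general": [(8, "llama3.1:8b")],
}


def recommend(use_case: str, ram_gb: float) -> Optional[str]:
    """Return the largest listed model whose RAM tier fits, or None if none does."""
    best = None
    for min_ram, tag in PICKS[use_case]:
        if ram_gb >= min_ram:
            best = tag
    return best


print(recommend("vietnamese", 8))
print(recommend("reasoning", 16))
```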

Real Test: Same Prompt, 4 Models Respond Differently

I tested the same Vietnamese prompt on 4 popular models (three at 7-8B plus Gemma 3 4B, all with q4_K_M quantization) so you can see the differences more clearly:

Prompt: “Explain how DNS works in simple language, about 3-4 sentences.”

Qwen 2.5 7B: Natural response, correct Vietnamese grammar, clear explanation. No vocabulary errors or awkward sentences. This is output you can use immediately without much editing.

Llama 3.1 8B: Correct content but Vietnamese phrasing sounds a bit “machine translated”. Some phrases don’t sound natural. Still usable but needs review.

DeepSeek-R1 8B: Very detailed response, with long reasoning section before giving the main answer. Good content quality but much longer response than requested. Vietnamese at decent level.

Gemma 3 4B: Short and to the point. Vietnamese somewhat stiff but acceptable for a 4B model. Fastest response among the 4 models.
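To repeat this kind of comparison yourself, you can send one prompt to several models through Ollama's local HTTP API. The sketch below assumes Ollama is running on its default address (localhost:11434) and that the four models are already pulled; the prompt is the English rendering shown above, not the original Vietnamese text, and build_request, ask, and compare are hypothetical helper names.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumption: default local address
MODELS = ["qwen2.5:7b", "llama3.1:8b", "deepseek-r1:8b", "gemma3:4b"]
PROMPT = "Explain how DNS works in simple language, about 3-4 sentences."


def build_request(model: str, prompt: str) -> dict:
    """JSON body for a single non-streaming completion."""
    return {"model": model, "prompt": prompt, "stream": False}


def ask(model: str, prompt: str) -> dict:
    """Send the prompt to one model and return the parsed JSON reply."""
    body = json.dumps(build_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.load(resp)


def compare(models=MODELS, prompt=PROMPT) -> None:
    """Print each model's answer along with its generation speed."""
    for model in models:
        reply = ask(model, prompt)
        # eval_count is tokens generated; eval_duration is in nanoseconds
        speed = reply["eval_count"] / reply["eval_duration"] * 1e9
        print(f"--- {model} ({speed:.1f} tokens/s) ---")
        print(reply["response"].strip())
```

Calling compare() on the VPS prints the four answers one after another, which makes differences in length, fluency, and speed easy to spot.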

Conclusion: Which Model Should You Choose?

After testing and comparing, here are my recommendations:

- Just starting, want simplicity: Just run ollama pull qwen2.5:7b and use it. This is the best choice for most Vietnamese users.

- Weak VPS (4GB RAM): ollama pull qwen2.5:3b or ollama pull phi3.5

- Need a coding assistant: ollama pull qwen2.5-coder:7b or ollama pull deepseek-r1:8b

- Want the highest quality (16GB RAM): ollama pull qwen2.5:14b

- Want to try multiple models: Pull 2-3 models and test them with the same prompt. Ollama makes switching models easy, so there is no need to commit to a single one.

Tip: You can keep multiple models installed in Ollama at the same time, as long as you have enough disk space. Ollama only loads a model into RAM when you actually use it, and unloads it automatically after a few minutes of inactivity (5 minutes by default).
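How long a model stays in RAM can be adjusted per request with the keep_alive field of Ollama's HTTP API. The sketch below only builds the request bodies (sending them works as with any POST to /api/generate); the helper names are hypothetical, and the values shown are examples rather than recommendations.

```python
def with_keep_alive(model: str, prompt: str, keep_alive="5m") -> dict:
    """Request body that keeps the model loaded for the given duration after the reply."""
    return {"model": model, "prompt": prompt, "stream": False, "keep_alive": keep_alive}


def unload_request(model: str) -> dict:
    """Request body that asks Ollama to unload the model right away (keep_alive of 0)."""
    return {"model": model, "keep_alive": 0}


# Keep the model warm for half an hour on a VPS with RAM to spare
print(with_keep_alive("qwen2.5:7b", "Hello", keep_alive="30m"))
# Free the RAM immediately, for example before loading a larger model
print(unload_request("qwen2.5:7b"))
```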

The AI model world is developing extremely fast. Every few months there are new, better models. But with what’s currently available, Qwen 2.5 is the most comprehensive choice for Vietnamese users self-hosting AI on VPS. Start with it, then try other models when you get familiar.


About the author

Trần Thắng

Expert at AZDIGI with years of experience in web hosting and system administration.